Before artificial intelligence started running complex systems and influencing everyday decisions, one question kept surfacing—can we really trust what we can’t see? As AI becomes smarter and more autonomous, ensuring that its actions remain transparent, ethical, and accountable has never been more important.

That’s where blockchain enters with real utility. With its transparent, tamper-proof records, blockchain offers a practical way to monitor and verify how AI makes decisions—without giving control to a single authority.

It replaces blind faith with verifiable truth, turning AI governance from theory into something we can actually audit and improve.

This blog post will uncover how blockchain is redefining AI governance in smart Web3 systems, bringing integrity, security, and accountability to the next generation of intelligent technologies.


Key Takeaways

- AI governance ensures that artificial intelligence systems operate transparently, fairly, and ethically, backed by global frameworks and national policies.

- Blockchain enhances AI oversight by securing data integrity, enabling decentralized control, and providing transparent audit trails for compliance.

- Real-world implementations from platforms like nChain, EQTY Lab, and Ocean Protocol demonstrate practical use of blockchain for ethical AI management.

- Key challenges in blockchain-AI integration include scalability, legal uncertainty, and ethical concerns in decentralized decision-making systems.

- The future of tech-enabled AI oversight lies in automated compliance tools, cross-border standards, decentralized infrastructure, and privacy-preserving technologies.

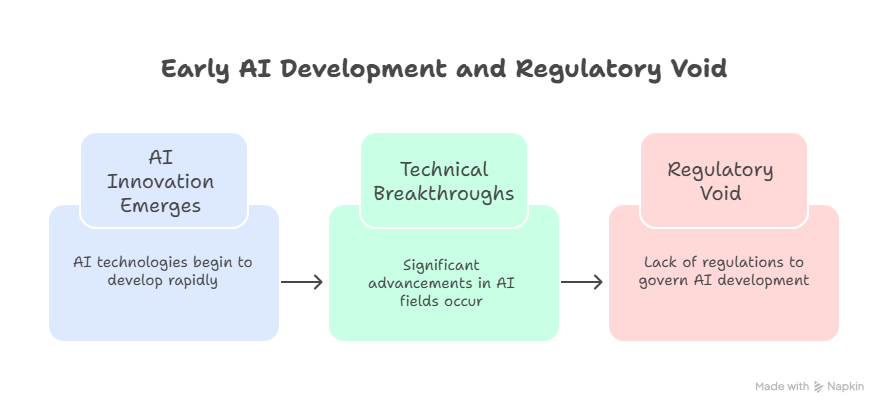

Background of AI Oversight and Regulatory Needs

The rise of artificial intelligence (AI) sparked major innovation—but also exposed serious governance gaps.

Between 2010 and 2022, the total number of AI publications nearly tripled, rising from approximately 88,000 in 2010 to more than 240,000 in 2022, driven by advancements from industry leaders like Google, Microsoft, and OpenAI. Yet, regulation lagged behind, allowing systems to operate with minimal oversight.

This led to real-world bias and ethical failures. For instance, commercial facial analysis systems exhibited significant racial and gender bias: the MIT Media Lab’s 2018 Gender Shades study found error rates of up to 34.7% for darker-skinned women, compared with less than 1% for lighter-skinned men. Such disparities raised urgent ethical concerns.

These concerns were amplified by high-profile cases, such as Amazon’s scrapped AI recruiting tool that favored male candidates or COMPAS, a criminal risk-assessment tool found to be biased against Black defendants.

These incidents revealed a clear need for transparency, accountability, and fairness in AI decision-making.

The Shift Toward Structured Ethical Frameworks for AI

In response, global institutions began establishing ethical guidelines. The OECD’s Principles on AI (2019) and the EU’s Ethics Guidelines for Trustworthy AI (2019) introduced transparency, human oversight, and accountability as core standards.

The U.S. Blueprint for an AI Bill of Rights (2022) and NIST’s AI Risk Management Framework (2023) added structure to national AI governance.

The EU AI Act, politically agreed in 2023 and formally adopted in 2024, moved toward enforceable regulation by classifying AI systems by risk level and restricting uses such as predictive policing and facial recognition. Meanwhile, China advanced with its Global AI Governance Initiative and its rules on generative AI.

These developments mark a global shift from ethical intentions to binding frameworks. Yet, despite progress, trust and data integrity remain significant challenges. This is where blockchain enters the conversation, offering immutable records, decentralized accountability, and verifiable transparency to strengthen and future-proof AI governance.

What is Artificial Intelligence (AI) Governance?


Now that you’ve seen the early evolution of AI and the growing need for oversight, it’s important to understand the foundation of what governs this powerful technology.

Put simply, AI governance refers to the frameworks, policies, and practices that ensure artificial intelligence systems are developed, deployed, and monitored in a way that aligns with human rights, ethical values, safety, and legal standards.

It covers everything from transparency and accountability to bias mitigation, privacy protection, and risk management.

AI governance isn’t a single law or policy; it’s a collective effort that spans governments, international organizations, private companies, and research institutions.

It guides how decisions are made in AI systems, how outcomes are audited, and how responsibilities are assigned—ultimately building trust in how AI operates in society.

Core Principles of Effective AI Oversight

The following core principles form the backbone of effective AI governance across global standards and best practices:

Transparency and Accountability in AI Models

Transparency involves making AI systems understandable, explainable, and auditable. This means stakeholders, including users, developers, and regulators, should be able to trace how an AI system processes inputs, makes decisions, and delivers outcomes.

For example, the EU AI Act requires high-risk AI systems to provide clear documentation, testing results, and human oversight protocols.

Accountability goes hand-in-hand with transparency. Organizations must take full responsibility for the impact of their AI models. This includes documenting design decisions, data sources, and training processes, ensuring that when things go wrong, there is a clear path for investigation and redress.

Ensuring Fairness and Reducing Algorithmic Bias

AI systems can reinforce existing inequalities if not properly designed and tested. Fairness means ensuring that models do not discriminate based on race, gender, age, or other protected characteristics.

A 2020 IBM study found that over 85% of AI professionals reported bias in their models at some point during development, highlighting how widespread this issue remains.

Effective governance frameworks promote the use of diverse, representative datasets and rigorous bias testing. Tools like IBM’s AI Fairness 360 and Google’s What-If Tool help developers assess and mitigate unfair outcomes, ensuring equitable treatment for all user groups.
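To make bias testing concrete, here is a minimal sketch of one widely used check, the disparate impact ratio, which compares how often two groups receive a favorable outcome. It is plain Python and does not use the AI Fairness 360 or What-If Tool APIs; the group labels, the sample data, and the 0.8 cutoff (the commonly cited "four-fifths" heuristic) are illustrative assumptions.

```python
from collections import defaultdict

def selection_rates(records):
    """Return the favorable-outcome rate per group.

    `records` is an iterable of (group, outcome) pairs, where outcome 1 = favorable.
    """
    totals = defaultdict(int)
    favorable = defaultdict(int)
    for group, outcome in records:
        totals[group] += 1
        favorable[group] += outcome
    return {g: favorable[g] / totals[g] for g in totals}

def disparate_impact(records, unprivileged, privileged):
    """Ratio of the unprivileged group's rate to the privileged group's rate."""
    rates = selection_rates(records)
    return rates[unprivileged] / rates[privileged]

# Hypothetical screening results: (group, 1 = shortlisted)
sample = [("A", 1)] * 6 + [("A", 0)] * 4 + [("B", 1)] * 3 + [("B", 0)] * 7
ratio = disparate_impact(sample, unprivileged="B", privileged="A")
passes_four_fifths = ratio >= 0.8  # assumed threshold, not a legal test
```

In this made-up sample, group A is shortlisted 60% of the time and group B 30% of the time, so the ratio is 0.5 and the check fails. A single metric like this is a starting point for investigation, not proof that a model is fair or unfair.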

Prioritizing Safety, Security, and Risk Management

AI models, especially those operating in critical sectors, must be safe and secure. This includes protection against model failures, adversarial attacks, and unintended behaviors. Safety also ensures that AI systems are robust, perform reliably across different environments, and include built-in controls to prevent harmful outputs.

The NIST AI Risk Management Framework (2023) provides structured guidance for identifying and mitigating potential risks throughout the AI lifecycle.

Safety audits, incident response protocols, and red-teaming exercises are becoming integral to responsible AI development.

Respecting User Privacy and Protecting Sensitive Data

Privacy remains one of the most sensitive and regulated areas in AI governance. AI systems often rely on large volumes of personal data for training and operation. Without strict safeguards, this can lead to misuse, data leaks, or violations of privacy rights.

Regulations like the General Data Protection Regulation (GDPR) in the EU set high standards for consent, data minimization, and user rights. Techniques such as differential privacy, federated learning, and data anonymization are essential tools to balance AI performance with individual privacy protections.
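As a rough illustration of federated learning, the toy sketch below trains a one-parameter model across two "clients" that never share their raw data. Each client computes a local update, and only the updated weight is sent back and averaged. The function names, learning rate, and data are assumptions made for this example; production systems add secure aggregation, many parameters, and far more rounds of engineering.

```python
def local_update(weight, data, lr=0.1):
    """One gradient-descent step for the model y = weight * x on local data only."""
    grad = sum(2 * (weight * x - y) * x for x, y in data) / len(data)
    return weight - lr * grad

def federated_average(global_weight, clients, rounds=50):
    """Average client updates, weighted by how many samples each client holds."""
    for _ in range(rounds):
        updates = [(local_update(global_weight, data), len(data)) for data in clients]
        total = sum(n for _, n in updates)
        global_weight = sum(w * n for w, n in updates) / total
    return global_weight

# Hypothetical private datasets, both following y = 2x
clients = [
    [(1.0, 2.0), (2.0, 4.0)],  # stays on client 1
    [(3.0, 6.0)],              # stays on client 2
]
learned_weight = federated_average(0.0, clients)
```

The coordinator only ever sees weights, never the `(x, y)` pairs, yet the shared model still converges toward the underlying relationship.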

Aligning AI with Human Values and Ethics

Finally, effective oversight ensures that AI systems align with broader human values, such as dignity, autonomy, fairness, and justice. This principle encourages the development of AI that enhances human well-being rather than replacing or harming it.

Ethical AI design means involving multidisciplinary teams—ethicists, sociologists, and legal experts—early in the development process. It also means setting clear boundaries on applications that could violate fundamental rights, such as autonomous lethal weapons or mass surveillance tools.

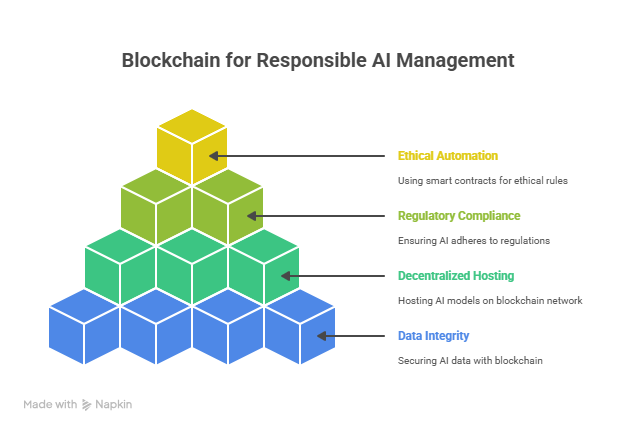

Blockchain’s Role in Responsible AI Management

Blockchain technology plays a critical role in advancing responsible AI governance by enhancing transparency, accountability, and control through decentralized infrastructure and tamper-proof systems.

Below are the key ways blockchain contributes to responsible AI management:

Securing AI Data Integrity and Audit Trails

AI systems are only as good as the data they learn from, and compromised or unverified data can lead to flawed models, biased decisions, or outright harm. Blockchain secures the integrity of training and operational data by recording a cryptographic fingerprint of every transaction, dataset entry, and model update on an immutable ledger.

This means no single party can alter or delete data without leaving a trace, which significantly improves data provenance and trust in the AI lifecycle.

For example, in the healthcare sector, companies like BurstIQ use blockchain to manage and verify patient data for AI-driven diagnostics. With data immutably recorded on the blockchain, healthcare AI tools can train on verified, high-quality datasets, minimizing the risk of manipulation or corruption.
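The tamper-evidence idea behind these audit trails can be sketched in a few lines. The example below is a simplified, single-machine hash chain, not a real blockchain and not BurstIQ's implementation: each entry stores the hash of the previous one, so changing any past entry breaks verification. The class name and event fields are hypothetical.

```python
import hashlib
import json

def entry_hash(body):
    """Hash a canonical JSON encoding so key order cannot change the result."""
    canonical = json.dumps(body, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()

class AuditLog:
    def __init__(self):
        self.entries = []

    def append(self, event):
        prev = self.entries[-1]["hash"] if self.entries else "0" * 64
        body = {"index": len(self.entries), "event": event, "prev_hash": prev}
        self.entries.append({**body, "hash": entry_hash(body)})

    def verify(self):
        """Recompute every hash and check each link to the previous entry."""
        prev = "0" * 64
        for i, e in enumerate(self.entries):
            body = {"index": e["index"], "event": e["event"], "prev_hash": e["prev_hash"]}
            if e["index"] != i or e["prev_hash"] != prev or e["hash"] != entry_hash(body):
                return False
            prev = e["hash"]
        return True

log = AuditLog()
log.append({"type": "dataset_added", "sha256": "ab12"})
log.append({"type": "model_updated", "version": "1.1"})
ok_before = log.verify()
log.entries[0]["event"]["sha256"] = "ffff"  # simulate tampering with history
ok_after = log.verify()
```

Here `ok_before` is true and `ok_after` is false. A public blockchain adds what this sketch lacks: many independent parties holding copies of the chain, so no single operator can quietly rewrite it and recompute the hashes.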

Decentralized AI Infrastructure and Model Hosting

Traditional AI relies on centralized platforms and servers, creating single points of failure, ownership issues, and increased risk of censorship or abuse.

In contrast, blockchain enables decentralized AI model hosting, where machine learning models are distributed across multiple nodes on a peer-to-peer network. This approach promotes transparency, democratizes control, and reduces reliance on big tech companies.

Projects like Ocean Protocol and SingularityNET exemplify this model. Ocean Protocol allows data providers and AI developers to share and monetize data and models securely through a blockchain-based marketplace.

SingularityNET, founded by Ben Goertzel, whose team contributed to the AI behind the Sophia robot, enables developers to publish, share, and collaborate on AI services in a decentralized network using smart contracts and tokens.

This infrastructure is crucial for Web3 ecosystems, where trust is not placed in a single organization but encoded into decentralized protocols. It also improves resilience by distributing computation and storage—making AI systems less vulnerable to attacks or censorship.

Supporting Regulatory Compliance and Traceability

One of the biggest challenges in AI governance is proving compliance with legal and ethical standards. Blockchain’s core feature—a tamper-resistant, time-stamped record of transactions—supports full traceability and regulatory compliance across the AI supply chain.

With blockchain, organizations can maintain detailed logs of:

- Who accessed or modified a model or dataset

- When changes were made

- What specific parameters or data were used for training

This level of transparency is essential for audits, especially under frameworks like the EU AI Act, which demands extensive documentation for high-risk AI systems. For instance, the European Blockchain Services Infrastructure (EBSI) is piloting the use of distributed ledgers to improve public sector transparency, including AI auditing.

Moreover, blockchain helps track AI model versions and decisions over time—providing digital fingerprints for each model iteration. This traceability is essential not only for liability and redress but also for ensuring accountability in dynamic, self-learning AI environments.

Smart Contracts for Automated Ethical Rules

Smart contracts are self-executing agreements encoded on a blockchain. When applied to AI, they can enforce predefined ethical or operational rules, automatically halting or approving actions based on compliance with established criteria.

For example, a smart contract could:

- Block an AI system from accessing sensitive data if the user hasn’t given consent

- Pause a model’s deployment if bias exceeds a certain threshold during testing

- Enforce licensing rules in decentralized AI marketplaces
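The three rules above can be sketched as a simple policy gate. This is an off-chain Python simulation of the decision logic only; a real deployment would express it in a smart contract language and run it on a ledger. The class, method names, and the 0.1 bias threshold are assumptions made for illustration.

```python
from dataclasses import dataclass, field

@dataclass
class PolicyGate:
    max_bias: float                                # hypothetical bias threshold
    consents: set = field(default_factory=set)     # users who have given consent
    licensed: set = field(default_factory=set)     # parties holding a license
    events: list = field(default_factory=list)     # decision log for later audit

    def _decide(self, rule, allowed, subject):
        self.events.append((rule, subject, "allow" if allowed else "deny"))
        return allowed

    def can_access_data(self, user_id):
        return self._decide("consent", user_id in self.consents, user_id)

    def can_deploy(self, model_id, bias_score):
        return self._decide("bias", bias_score <= self.max_bias, model_id)

    def can_use_model(self, licensee):
        return self._decide("license", licensee in self.licensed, licensee)

gate = PolicyGate(max_bias=0.1, consents={"user-1"}, licensed={"lab-a"})
access_ok = gate.can_access_data("user-2")                # no consent on record
deploy_ok = gate.can_deploy("model-7", bias_score=0.25)   # above the threshold
license_ok = gate.can_use_model("lab-a")                  # licensed party
```

Every decision, allowed or denied, lands in `events`, which is the part an on-chain version would make tamper-evident.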

Projects like Fetch.ai and Prove AI AG are working on integrating AI agents with smart contracts to automate decision-making and governance.

In Prove AI’s ecosystem, AI models are embedded with cryptographic proofs and governed by rule-based smart contracts that verify compliance with standards in real time.

This approach is especially useful in autonomous AI systems, such as those in self-driving cars or decentralized finance (DeFi), where human oversight is limited or infeasible. Smart contracts ensure that models operate within ethical and legal boundaries by design, not just by policy.

Real-World Examples and Case Studies of AI Governance in Blockchain

Here are case studies that demonstrate how blockchain is actively supporting responsible AI development:

nChain: Securing AI Operations with Immutable Data

nChain, a blockchain technology company known for its work on the Bitcoin SV blockchain, focuses on securing AI workflows by providing immutable data storage and digital evidence trails.

Its platform enables organizations to register and timestamp every phase of the AI lifecycle, from data sourcing and preprocessing to training and deployment, on a public blockchain ledger.

This approach helps to:

- Prevent tampering or unauthorized alterations in datasets

- Establish proof of integrity for model training inputs and outcomes

- Create forensic-grade audit logs for compliance and legal verification

nChain’s solution aligns particularly well with the NIST AI Risk Management Framework and the EU AI Act, both of which emphasize documentation and traceability across AI development pipelines.

By anchoring every process to the blockchain, nChain makes it possible to provide evidence-based accountability, a critical element for regulators and high-risk sectors like finance, health, and defense.

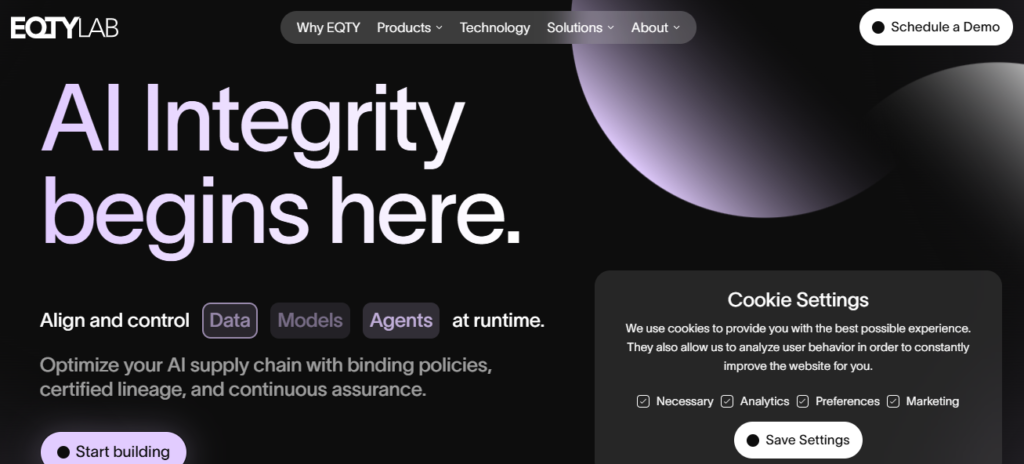

EQTY Lab: Tokenizing Ethical AI Compliance

EQTY Lab is an emerging platform that focuses on incentivizing ethical AI development through blockchain tokenization. The company offers a compliance-as-a-service platform where AI projects can be evaluated based on predefined ethical standards and then rewarded or penalized using digital tokens.

Key features include:

- Tokenized attestations for compliance with principles such as fairness, explainability, and privacy

- A blockchain-based scoring system to assess and rank AI models based on ethical indicators

- Smart contract enforcement of ethical commitments, making ethical claims verifiable on-chain

Through EQTY’s model, developers stake tokens to prove their commitment to responsible AI. If they breach ethical thresholds—e.g., fail a bias test or violate privacy standards—the smart contract enforces penalties, and the community is notified via a decentralized audit log. This approach promotes self-regulation at scale while also aligning incentives for good behavior.

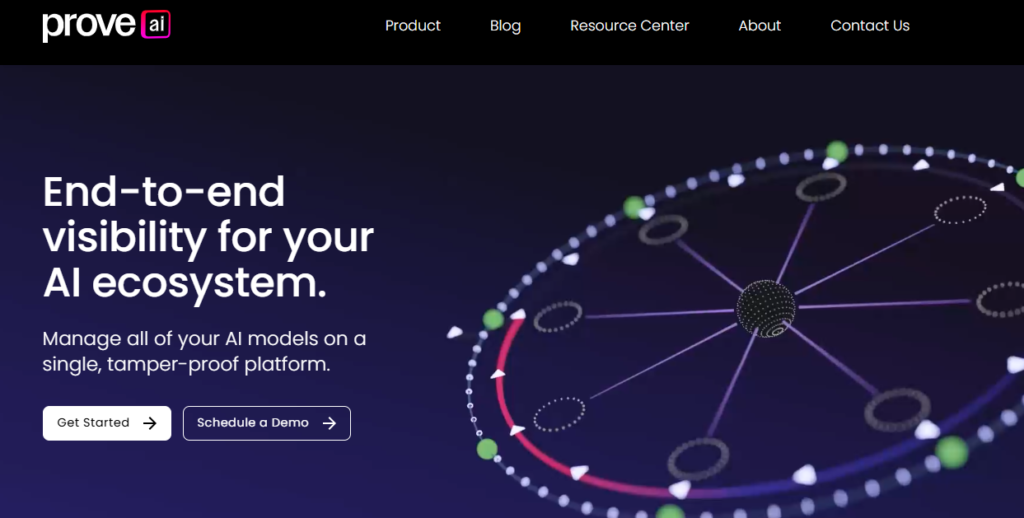

Prove AI AG: Decentralized Verification of AI Algorithms

Based in Switzerland, Prove AI AG specializes in cryptographic proofs for AI model compliance. The company leverages zero-knowledge proofs (ZKPs) and distributed ledger technology (DLT) to allow AI developers and users to verify algorithm behavior without exposing proprietary models or sensitive data.

Prove AI’s governance layer includes:

- Decentralized registries of algorithm behavior and historical performance

- Real-time verification of AI outputs against ethical and legal constraints

- Integration of smart contracts to automatically block AI functions that breach compliance

For example, in a cross-border healthcare application, Prove AI enabled hospitals to verify that a diagnostic AI system complied with data handling laws like GDPR, without needing to access the training data or source code directly. Their blockchain-stored compliance certificates are regularly audited by independent validators on the network.

Ocean Protocol: Decentralized Data Sharing for Ethical AI

Ocean Protocol is a leading example of how blockchain can foster ethical data sharing for AI development.

Built on Ethereum, Ocean offers a decentralized data marketplace where individuals, companies, and institutions can share or monetize datasets while maintaining full control over privacy and permissions.

Key AI governance contributions include:

- Use of datatokens to manage access and traceability

- On-chain licensing terms that enforce ethical usage (e.g., no resale, non-commercial use)

- Compute-to-data architecture, allowing AI training without direct data exposure

Ocean Protocol is particularly useful in sensitive industries like climate science, health, and mobility, where high-quality data is needed for AI training but privacy must be preserved.

For instance, in collaboration with the German Federal Ministry of Education and Research, Ocean has supported responsible AI innovation in mobility systems by enabling data sharing across municipalities.

Ocean’s framework ensures that AI systems trained on their marketplace data are auditable, permissioned, and compliant—bridging a key gap in current AI governance models.
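The compute-to-data idea can be illustrated with a small sketch: the data stays with its owner, and outside parties may only submit pre-approved jobs that return aggregates. This is not Ocean Protocol's API. The `DataVault` class, the approved job list, and the minimum row count are hypothetical choices for this example.

```python
from statistics import mean

class DataVault:
    """Holds private records and only ever releases approved aggregates."""

    APPROVED = {"mean": mean, "count": len, "max": max}

    def __init__(self, records, min_rows=5):
        self._records = list(records)
        self._min_rows = min_rows  # refuse aggregates over very small groups

    def run(self, job_name, column):
        if job_name not in self.APPROVED:
            raise PermissionError(f"job {job_name!r} is not approved")
        values = [r[column] for r in self._records]
        if len(values) < self._min_rows:
            raise ValueError("too few rows to release an aggregate")
        return self.APPROVED[job_name](values)

# Hypothetical private dataset that never leaves the vault
vault = DataVault({"age": a} for a in (30, 35, 40, 45, 50))
average_age = vault.run("mean", "age")
```

A request such as `vault.run("dump", "age")` raises `PermissionError`, because only the allow-listed computations can touch the data.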

SingularityNET: Decentralized AI Services on the Blockchain

SingularityNET, founded by Dr. Ben Goertzel and known for powering the Sophia robot, is a decentralized marketplace for AI services that launched on Ethereum and has since expanded to the Cardano blockchain.

The platform enables developers to deploy, monetize, and connect AI models across a decentralized infrastructure, while embedding governance controls directly into the network.

Features relevant to AI governance include:

- Smart contract-enforced service terms (e.g., non-discriminatory outputs, uptime guarantees)

- Decentralized reputation systems that help users assess AI service quality and ethics

- Use of AGIX tokens to facilitate payments, access control, and governance voting

SingularityNET’s ecosystem includes use cases in natural language processing, biomedical research, robotics, and financial forecasting.

By distributing ownership and enforcing usage rules via blockchain, the platform minimizes centralized control and promotes transparent, collaborative AI development.

Protecting IP and Proprietary AI Assets with Blockchain

As artificial intelligence becomes central to competitive advantage in sectors like healthcare, finance, manufacturing, and defense, protecting intellectual property (IP) tied to AI models, training datasets, and algorithms has never been more critical.

These assets represent significant investment and innovation, but traditional IP protection methods often fall short in fast-moving digital environments.

Blockchain technology offers a modern, decentralized solution for safeguarding proprietary AI assets by providing verifiable ownership, immutable audit trails, and transparent licensing frameworks.

How Distributed Ledgers Help Safeguard AI Innovations

Distributed ledger technology (DLT) enables AI developers and organizations to store ownership records, model metadata, and licensing agreements on an immutable, decentralized ledger.

Unlike centralized databases that are vulnerable to hacking, tampering, or insider threats, blockchain provides a tamper-proof system where every transaction is permanently recorded and verifiable by all parties.

By anchoring AI development logs, version history, and user access to a blockchain:

- AI creators can verify the origin and authorship of a model or dataset

- Unauthorized changes can be detected by comparing an asset against its recorded fingerprint

- Legal disputes over model ownership, usage rights, or licensing terms are easier to resolve with immutable evidence

Timestamping and Proof-of-Ownership for Algorithmic IP

One of blockchain’s most powerful features in IP protection is timestamping—the ability to record a piece of digital content (e.g., code, algorithm, model weights) at a specific point in time and prove that it existed then, without revealing its details publicly.

This mechanism creates a digital fingerprint, or hash, of the asset, which is stored immutably on-chain. The original asset doesn’t need to be published; only its cryptographic hash is recorded. This allows developers to:

- Prove prior invention in case of patent or copyright disputes

- Defend against theft or misattribution by competitors

- Register and license AI models on-chain with traceable terms

Platforms like nChain, Provenance, and IPFS/Filecoin provide infrastructure for such timestamping and decentralized storage.

A developer building a proprietary recommendation algorithm, for example, can create a hash of the code and register it on a blockchain. If another party claims to own or license the same IP, the original timestamp provides a legal anchor for dispute resolution.
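Here is a minimal sketch of that workflow. A plain dictionary stands in for the blockchain, and a random salt is mixed into the hash so the fingerprint cannot be guessed from small or predictable files; both are simplifying assumptions, as are the function names.

```python
import hashlib
import secrets
from datetime import datetime, timezone

def commit(asset_bytes, salt=None):
    """Return (digest, salt). Only the digest would be published on-chain."""
    salt = salt or secrets.token_bytes(16)
    digest = hashlib.sha256(salt + asset_bytes).hexdigest()
    return digest, salt

def verify_commitment(asset_bytes, salt, recorded_digest):
    """Later, reveal the asset and salt to show they match the recorded digest."""
    return hashlib.sha256(salt + asset_bytes).hexdigest() == recorded_digest

ledger = {}  # stand-in for an on-chain registry: digest -> timestamp

source_code = b"def recommend(user): ..."  # hypothetical proprietary algorithm
digest, salt = commit(source_code)
ledger[digest] = datetime.now(timezone.utc).isoformat()

still_matches = verify_commitment(source_code, salt, digest)
altered_matches = verify_commitment(source_code + b" # edited", salt, digest)
```

The developer keeps the code and the salt private. In a dispute, revealing both lets anyone recompute the hash and compare it with the timestamped record, while a modified copy fails the check.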

Moreover, this capability supports automatic royalties and licensing enforcement. Smart contracts can trigger payments to model creators whenever their IP is used or accessed, enabling AI to be licensed as easily as digital music or art.

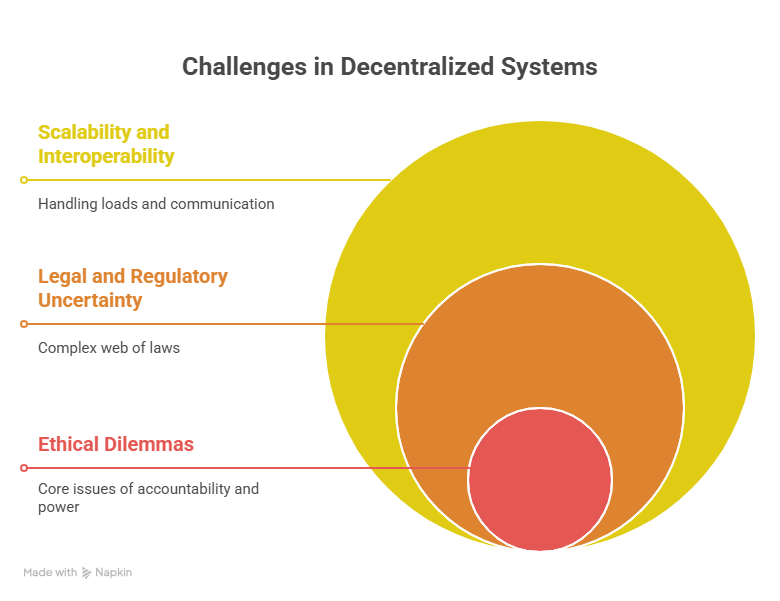

Challenges in Blockchain-AI Integration

While the convergence of blockchain and artificial intelligence offers promising solutions for trust, transparency, and accountability, it also introduces several technical, legal, and ethical challenges.

As organizations and developers work to merge these powerful technologies into scalable, responsible systems, they must address the following key issues:

Scalability and Interoperability Issues

Scalability is a major hurdle for both AI and blockchain, individually and especially when integrated.

AI systems require high-speed data processing, low-latency computations, and real-time responsiveness, whereas public blockchain networks such as Ethereum and Bitcoin often struggle with limited transaction throughput and, on proof-of-work chains like Bitcoin, high energy consumption.

For instance, Ethereum 1.0 processes about 15–30 transactions per second (TPS), while centralized systems like Visa report capacity of over 24,000 TPS.

AI models, particularly those involving deep learning, can require billions of arithmetic operations for a single prediction, making full on-chain model execution impractical.

While Layer-2 solutions, sharding, and proof-of-stake mechanisms have improved blockchain performance, these technologies are still maturing.

Running AI models off-chain and anchoring key events on-chain (as seen in Ocean Protocol’s “compute-to-data” architecture) is a practical workaround, but it adds complexity and cost.
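One common way to anchor many off-chain events with a single on-chain write is a Merkle tree. At 15 TPS, recording one million inference logs as individual transactions would take roughly 18.5 hours of chain capacity, whereas one Merkle root fits in a single transaction. The sketch below is a simplified, unhardened version of the technique (no domain separation between leaves and nodes), and the event names are made up.

```python
import hashlib

def h(data):
    return hashlib.sha256(data).digest()

def merkle_root(leaves):
    if not leaves:
        raise ValueError("need at least one leaf")
    level = [h(leaf) for leaf in leaves]
    while len(level) > 1:
        if len(level) % 2:
            level.append(level[-1])  # duplicate the last node on odd-sized levels
        level = [h(level[i] + level[i + 1]) for i in range(0, len(level), 2)]
    return level[0]

def merkle_proof(leaves, index):
    """Collect the sibling hashes needed to rebuild the root from one leaf."""
    level = [h(leaf) for leaf in leaves]
    proof = []
    while len(level) > 1:
        if len(level) % 2:
            level.append(level[-1])
        sibling = index ^ 1
        proof.append((level[sibling], sibling < index))  # (hash, sibling_is_left)
        level = [h(level[i] + level[i + 1]) for i in range(0, len(level), 2)]
        index //= 2
    return proof

def verify_proof(leaf, proof, root):
    node = h(leaf)
    for sibling, sibling_is_left in proof:
        node = h(sibling + node) if sibling_is_left else h(node + sibling)
    return node == root

events = [f"inference-{i}".encode() for i in range(5)]  # hypothetical off-chain logs
root = merkle_root(events)                              # the only value anchored on-chain
proof = merkle_proof(events, 3)
valid = verify_proof(events[3], proof, root)
forged = verify_proof(b"inference-tampered", proof, root)
```

An auditor holding only the on-chain root can confirm that a specific log entry was part of the batch, using a proof whose size grows with the logarithm of the batch size.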

Interoperability poses another barrier. Different blockchain platforms (e.g., Ethereum, Cardano, and Hyperledger) use incompatible consensus protocols, smart contract languages, and data standards, making it difficult for AI systems to operate across multiple networks.

Without seamless interoperability, the benefits of decentralized AI governance, such as cross-platform traceability and universal ethical enforcement, remain limited.

Legal and Regulatory Uncertainty

Despite growing attention from global regulators, the legal status of many blockchain and AI applications remains unclear, creating compliance risks for developers and organizations.

Blockchain-related concerns include:

- Whether smart contracts are legally enforceable across jurisdictions

- How data protection laws (e.g., GDPR’s right to be forgotten) apply to immutable ledgers

- Who is held responsible in decentralized networks, particularly in DAO-governed AI systems

AI regulations, meanwhile, are still in development. The EU AI Act, the proposed U.S. Algorithmic Accountability Act, and China’s AI governance rules all differ in scope, terminology, and enforcement mechanisms. Integrating AI with blockchain raises unique legal questions, such as:

- How to certify or audit AI models that evolve over time (e.g., through machine learning)

- How to assign liability in autonomous or decentralized systems

- Whether AI-generated outcomes, like smart contract decisions, can be challenged in court

For instance, if an AI system embedded in a smart contract makes a discriminatory lending decision, is the creator, the contract deployer, or the network operator responsible? Without clear legal guidance, these questions remain unresolved, stalling wider adoption in regulated industries.

Ethical Dilemmas in Decentralized Governance

Decentralization promotes transparency and democratization, but it also introduces ethical complexities that are hard to resolve without centralized control or oversight.

Governance in blockchain-AI systems often relies on community voting, token-based incentives, and autonomous decision-making—all of which can conflict with ethical AI principles.

Key concerns include:

- Majority rule vs. minority rights: In tokenized governance systems, decisions can favor majority token holders, potentially sidelining marginalized groups.

- Opacity of AI behavior: Even when governance is decentralized, AI models may still behave like “black boxes,” making it hard for communities to make informed ethical decisions.

- Lack of accountability mechanisms: In fully decentralized AI ecosystems, assigning responsibility becomes difficult, especially if the model causes harm or violates ethical norms.

For example, DAO-controlled AI systems might deploy automated agents without any human oversight, raising questions about how and when to intervene if the system behaves unfairly or dangerously.

Ethical frameworks that rely on continuous human supervision, context-specific judgment, and adaptive standards can be difficult to encode in smart contracts or enforce via decentralized governance alone. This creates tension between technological autonomy and moral responsibility.

Future of Tech-Enabled AI Oversight

As artificial intelligence continues to evolve and influence critical decisions across industries, the need for more effective, technology-driven governance systems becomes increasingly urgent.

Traditional oversight methods, such as manual audits, paper-based compliance, and reactive regulation, are no longer sufficient in a world where AI models self-learn, adapt, and operate in real time.

The future of AI oversight will be proactive, automated, and deeply integrated with technologies like blockchain, IoT, federated learning, and smart contracts.

AI Oversight Will Be Automated and Embedded

Future oversight systems will be built into the AI pipeline itself, rather than layered on afterward. This means using automated monitoring tools that continuously track model performance, flag anomalies, and verify compliance with ethical, legal, and operational standards.

For instance, advanced AI governance platforms may:

- Automatically pause or update a model if bias exceeds pre-set thresholds

- Send alerts when model decisions deviate from expected norms

- Generate real-time compliance reports for regulators and stakeholders
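A stripped-down version of such a monitor might look like the sketch below. It watches a rolling window of binary decisions and pauses the model when the positive rate drifts too far from an expected baseline. The class name, window size, and tolerance are illustrative assumptions, and real observability platforms track many more signals.

```python
from collections import deque

class DriftMonitor:
    def __init__(self, baseline_rate, tolerance, window=100):
        self.baseline = baseline_rate
        self.tolerance = tolerance
        self.window = deque(maxlen=window)
        self.paused = False
        self.alerts = []

    def observe(self, decision):
        """Record one binary decision (1 = positive) and check for drift."""
        self.window.append(decision)
        if len(self.window) < self.window.maxlen:
            return  # wait until the window is full before judging
        rate = sum(self.window) / len(self.window)
        if abs(rate - self.baseline) > self.tolerance:
            self.paused = True
            self.alerts.append(f"positive rate {rate:.2f} outside tolerance")

monitor = DriftMonitor(baseline_rate=0.5, tolerance=0.2, window=10)
for decision in [1, 0] * 5:   # traffic that matches the baseline
    monitor.observe(decision)
paused_on_normal_traffic = monitor.paused
for decision in [1] * 8:      # a sudden run of positive decisions
    monitor.observe(decision)
```

After the run of positive decisions the monitor sets `paused` and records alerts, which is where a compliance report or a human review would be triggered.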

Practices like ModelOps and tools such as ML observability platforms are early indicators of this shift, offering real-time visibility into how AI systems behave in production environments.

Decentralization Will Reinforce Trust and Accountability

Technologies like blockchain and decentralized identity (DID) will play a larger role in enhancing transparency and trust in AI systems. By anchoring training datasets, model versions, and decision logs on tamper-proof ledgers, organizations can build systems that are verifiable by default.

This approach allows for:

- Immutable audit trails of model development and deployment

- Traceable accountability across supply chains and data ecosystems

- Permissioned access and automated enforcement of ethical usage rules through smart contracts

Projects like Ocean Protocol, nChain, and Prove AI AG are already laying the groundwork for such decentralized, transparent AI ecosystems.

Cross-Border Standards and Interoperability Will Gain Importance

AI governance will increasingly become a global effort. As countries implement their own AI laws and frameworks, such as the EU AI Act, China’s AI guidelines, and the U.S. Blueprint for an AI Bill of Rights, the lack of interoperability between them poses challenges for international companies.

In the future, expect to see:

- The rise of global AI compliance standards similar to ISO standards in manufacturing

- Interoperable policy layers embedded into governance tech stacks

- Efforts to align data privacy, bias evaluation, and safety assurance across jurisdictions

Initiatives such as the OECD AI Policy Observatory and UNESCO’s Recommendation on the Ethics of Artificial Intelligence are already working toward harmonizing global frameworks.

Ethical Governance Will Be Driven by Multi-Stakeholder Collaboration

As AI systems grow in complexity, no single entity, whether a company, government, or NGO, can effectively govern them alone. Future AI oversight will require collaboration across technologists, regulators, ethicists, civil society, and users.

This will lead to:

- Participatory governance models, where affected communities help define ethical thresholds

- AI ethics boards that include cross-functional and cross-cultural expertise

- Expansion of regulatory sandboxes to test AI innovations in controlled environments

Such collaborative models will help ensure that AI governance reflects societal values and adapts to cultural diversity rather than imposing rigid, one-size-fits-all rules.

Privacy-Preserving Technologies Will Enhance Oversight Without Compromising Data

Future oversight systems must ensure AI governance that respects data privacy, particularly in sensitive sectors such as healthcare, finance, and national security.

This is where privacy-preserving technologies will become essential, including:

- Federated learning

- Differential privacy

- Homomorphic encryption

These tools allow oversight entities to audit, monitor, and enforce rules without direct access to personal or proprietary data, preserving both trust and compliance.
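To show how an auditor could query data without seeing exact values, here is a minimal sketch of differential privacy using the Laplace mechanism on a counting query. Adding or removing one person changes a count by at most 1, so the noise scale is 1/epsilon. The dataset, the epsilon value, and the function names are assumptions for this example.

```python
import random

def laplace_noise(scale, rng):
    """Laplace(0, scale) noise, sampled as the difference of two exponentials."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)

def private_count(values, predicate, epsilon, rng):
    """Counting query with sensitivity 1, released with noise of scale 1/epsilon."""
    true_count = sum(1 for v in values if predicate(v))
    return true_count + laplace_noise(1.0 / epsilon, rng)

rng = random.Random(42)                   # seeded only to make the demo repeatable
ages = [23, 35, 67, 71, 45, 80, 19, 66]   # hypothetical sensitive records
noisy_seniors = private_count(ages, lambda a: a >= 65, epsilon=1.0, rng=rng)
```

Each released answer is slightly off, which protects any one individual, while the average of many answers stays close to the true count. Smaller epsilon values give stronger privacy and noisier results.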

Final Thoughts

AI doesn’t govern itself, and it shouldn’t. As systems grow more autonomous, embedding accountability into their core is no longer optional. Blockchain offers more than just technical support; it introduces mechanisms for transparency, traceability, and control that AI urgently needs.

Real progress will come from building systems where auditability, fairness, and security are enforced by design, not by exception. That means aligning technology with intent and architecture with ethics.

Integrating blockchain into AI governance isn’t a final step—it’s the infrastructure for what’s next: verifiable, human-aligned intelligence that earns trust at scale.

Now is the time to build that foundation, deliberately and transparently.

Frequently Asked Questions

How Is AI Used in the Blockchain?

AI is used in blockchain to optimize smart contracts, detect fraud, automate decision-making, enhance security, and analyze blockchain data for insights and predictive modeling.

What Is Governance in Blockchain?

Governance in blockchain refers to the processes, rules, and mechanisms that guide decision-making, updates, and dispute resolution within a blockchain network, often involving stakeholders like developers, node operators, and token holders.

Will AI Replace Blockchain?

No, AI will not replace blockchain; they serve different purposes and are increasingly used together to enhance transparency, security, and automation.

How To Combine Blockchain With AI?

Combine blockchain with AI by using decentralized ledgers to store and verify AI data, smart contracts to enforce rules, and cryptographic tools to secure model integrity and ensure transparent, accountable decision-making.

How To Build AI Governance?

To build AI governance, define ethical principles, implement transparent and auditable processes, ensure legal compliance, engage multi-stakeholder input, and integrate technologies like blockchain for traceability and accountability.