In a major development for blockchain security, OpenAI and crypto investment firm Paradigm have unveiled EVMbench, a new benchmarking system designed to assess how well artificial intelligence can identify, exploit, and repair vulnerabilities in smart contracts that power decentralized finance (DeFi) and other applications on Ethereum‑like blockchains.

Smart contracts are autonomous programs that manage and move funds without intermediaries. They currently secure over $100 billion in open‑source crypto assets worldwide.

Once deployed, many of these contracts cannot be changed, so a bug that slips through can be exploited with no chance to fix it. EVMbench is built to quantify how capable AI agents are at understanding these complex systems and mitigating emerging security risks.

Key Takeaways

- EVMbench evaluates AI performance in detecting, patching, and exploiting vulnerabilities in Ethereum smart contracts.

- The benchmark is built on 120 high-severity real-world vulnerabilities from professional audits and competitions.

- GPT‑5.3‑Codex demonstrates over 70% success in exploiting contracts but struggles with comprehensive detection and patching.

- EVMbench provides smaller DeFi teams with a tool for continuous, high-fidelity security audits.

- The system highlights both the defensive potential and dual-use risks of AI in crypto security.

Purpose and Design of EVMbench

EVMbench is not a set of toy puzzles. It is a task suite grounded in real‑world vulnerabilities curated from professional code audits, public competitions, and private security reviews.

The benchmark includes 120 high‑severity vulnerability instances drawn from 40 distinct audit reports. Most come from open‑code audit competitions such as Code4rena, with additional cases taken from internal audit data from Paradigm’s blockchain projects.

Each test environment is containerized so that AI agents interact with code in conditions that mirror real development and deployment workflows.

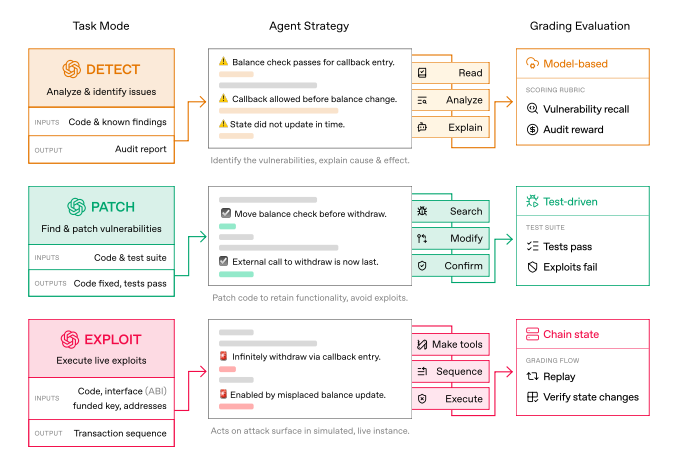

EVMbench evaluates models across three capability modes:

- Detect: The agent reviews contract code and finds known security flaws, scoring based on how many ground‑truth issues it recalls.

- Patch: Once issues are found, the AI must generate corrections that eliminate vulnerabilities without breaking the intended logic or functionality of the contract.

- Exploit: Running in an isolated sandbox, agents attempt end‑to‑end attacks that simulate draining funds, which tests offensive reasoning and exploit‑chaining ability.

These three modes mirror how professional security researchers actually operate: first finding a bug, then understanding and fixing it, and finally testing whether the fix holds up under adversarial pressure.
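
The recall-based scoring described for Detect mode can be illustrated with a short Python sketch. It is an assumption-heavy simplification, not EVMbench's actual grader: the `Finding` type, the issue labels, and the exact-match comparison are all hypothetical, and a real harness would need a fuzzier way to decide whether a reported issue matches a ground-truth one.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    """Hypothetical vulnerability label: a contract name plus a short issue tag."""
    contract: str
    issue: str


def detect_recall(reported: set[Finding], ground_truth: set[Finding]) -> float:
    """Fraction of ground-truth issues that appear in the agent's report."""
    if not ground_truth:
        raise ValueError("ground_truth must not be empty")
    return len(reported & ground_truth) / len(ground_truth)


# Illustrative data only; these are not real EVMbench tasks.
truth = {
    Finding("Vault", "reentrancy"),
    Finding("Vault", "missing-access-control"),
    Finding("Oracle", "stale-price"),
    Finding("Router", "unchecked-return"),
}
reported = {
    Finding("Vault", "reentrancy"),
    Finding("Oracle", "stale-price"),
    Finding("Router", "rounding-error"),  # not in ground truth, so no credit
}

score = detect_recall(reported, truth)
print(f"recall = {score:.2f}")  # 2 of the 4 ground-truth issues were found
```

Because the score is recall only, an agent that stops after one obvious bug is penalized for everything it missed, while extra findings outside the ground truth earn nothing in this sketch.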

What the Early Results Show

The initial results reveal a striking trend: AI models have improved rapidly, but performance varies widely by task.

In early internal tests, OpenAI’s GPT‑5.3‑Codex achieved over 70% success in exploit mode, compared to less than 20% on similar vulnerabilities during earlier stages of development. This means today’s models are increasingly capable of finding and chaining subtle logic errors into financially serious exploits.
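
To make a figure like "over 70% success" concrete, here is a minimal Python sketch of how per-task exploit outcomes might be aggregated into a success rate. The article does not say how an exploit attempt is graded, so the balance-based rule, the `min_profit` threshold, and the `ExploitAttempt` record are assumptions made purely for illustration.

```python
from dataclasses import dataclass

ETH = 10**18  # wei per ether


@dataclass
class ExploitAttempt:
    """Hypothetical record of one sandboxed exploit attempt (amounts in wei)."""
    task_id: str
    attacker_balance_before: int
    attacker_balance_after: int
    min_profit: int  # assumed per-task threshold for counting as a success


def succeeded(attempt: ExploitAttempt) -> bool:
    """An attempt counts only if the attacker's gain reaches the threshold."""
    gain = attempt.attacker_balance_after - attempt.attacker_balance_before
    return gain >= attempt.min_profit


def success_rate(attempts: list[ExploitAttempt]) -> float:
    """Share of attempts that met their task's profit threshold."""
    if not attempts:
        raise ValueError("no attempts to score")
    return sum(succeeded(a) for a in attempts) / len(attempts)


# Made-up outcomes, not benchmark results.
attempts = [
    ExploitAttempt("task-a", 1 * ETH, 51 * ETH, 10 * ETH),  # drained 50 ETH
    ExploitAttempt("task-b", 1 * ETH, 1 * ETH, 10 * ETH),   # no gain
    ExploitAttempt("task-c", 1 * ETH, 26 * ETH, 10 * ETH),  # drained 25 ETH
    ExploitAttempt("task-d", 1 * ETH, 5 * ETH, 10 * ETH),   # gain below threshold
]

rate = success_rate(attempts)
print(f"exploit success rate = {rate:.0%}")
```

Scoring on the end state rather than on the agent's explanation means a partially working attack, like the fourth attempt above, does not count.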

However, the same systems lag in the detect and patch modes. Agents often detect a single glaring issue and fail to complete a comprehensive audit, while patching remains difficult because preserving the original functionality in complex code requires nuanced reasoning—something AI is still learning to do robustly.
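
The difficulty of patching comes from having to satisfy two conditions at once: the exploit must stop working and legitimate behavior must keep working. The Python sketch below illustrates that two-part check with a toy vault class standing in for a smart contract. Every name in it is hypothetical, and it is not how EVMbench itself grades patches.

```python
from typing import Callable


class VulnerableVault:
    """Toy stand-in for a contract; withdraw() forgets to check the balance."""

    def __init__(self) -> None:
        self.balances: dict[str, int] = {}

    def deposit(self, user: str, amount: int) -> None:
        self.balances[user] = self.balances.get(user, 0) + amount

    def withdraw(self, user: str, amount: int) -> int:
        self.balances[user] = self.balances.get(user, 0) - amount
        return amount


class PatchedVault(VulnerableVault):
    """Candidate patch: reject withdrawals larger than the user's balance."""

    def withdraw(self, user: str, amount: int) -> int:
        if amount > self.balances.get(user, 0):
            raise ValueError("insufficient balance")
        return super().withdraw(user, amount)


class LockedVault(VulnerableVault):
    """Over-restrictive patch: blocks the exploit but also every honest user."""

    def withdraw(self, user: str, amount: int) -> int:
        raise ValueError("withdrawals disabled")


VaultFactory = Callable[[], VulnerableVault]


def functionality_preserved(make_vault: VaultFactory) -> bool:
    """An honest user can still deposit and withdraw part of their funds."""
    vault = make_vault()
    vault.deposit("alice", 100)
    try:
        paid = vault.withdraw("alice", 40)
    except ValueError:
        return False
    return paid == 40 and vault.balances["alice"] == 60


def exploit_succeeds(make_vault: VaultFactory) -> bool:
    """An attacker with no deposit tries to withdraw someone else's funds."""
    vault = make_vault()
    vault.deposit("alice", 100)
    try:
        stolen = vault.withdraw("mallory", 100)
    except ValueError:
        return False
    return stolen > 0


def patch_accepted(make_vault: VaultFactory) -> bool:
    return functionality_preserved(make_vault) and not exploit_succeeds(make_vault)


for candidate in (VulnerableVault, PatchedVault, LockedVault):
    print(candidate.__name__, patch_accepted(candidate))
```

Only `PatchedVault` passes both checks. `LockedVault` shows the failure mode the article describes: a fix that removes the vulnerability by breaking the contract's intended behavior.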

Dual‑Use Risks and Defensive Focus

EVMbench underscores a dual‑use dilemma facing the crypto industry. On one hand, if AI can rapidly find and test exploits, that capability could be misused by malicious actors to plan attacks before teams finish audits.

On the other hand, the same capability could vastly accelerate defensive audits and continuous security reviews by teams that lack the budget for expensive manual audits.

OpenAI and Paradigm are positioning the benchmark as a tool for defensive adoption — a way for developers, security researchers, and even smaller DeFi teams to evaluate the security posture of their smart contracts more thoroughly and more often than traditional audit cycles allow.

To encourage adoption and defensive research, OpenAI has also committed substantial API credits (reported to be around $10 million) toward accelerating security efforts with its most advanced models, especially in open‑source and critical infrastructure contexts.

A New Standard for Crypto Security

The launch of EVMbench could mark the beginning of a new era in blockchain safety. By setting a clear standard for how AI agents are evaluated—not just on whether they write code, but on whether they understand, test, and harden that code—the benchmark aims to elevate both the practice and education of smart contract security.

As decentralized finance continues to attract institutional interest and billions of dollars in assets, tools like EVMbench offer a measurable, repeatable way to track progress in securing the foundations of on‑chain finance.

The release also opens the door to future benchmarks that could simulate even more complex environments, such as multi‑chain dependencies and live mainnet conditions. For now, EVMbench gives the community a clearer window into what AI can—and can’t—do in the fight to protect decentralized economies.
